When people talk about AI infrastructure, the conversation usually starts in familiar places.

The software stack.

The compute stack.

The models.

The GPUs.

The race for more tokens, more performance, more scale.

But beneath all of that sits a layer that is becoming impossible to ignore.

> The Thermal Stack

And as AI data centers evolve into what many now call AI factories, this hidden layer is quickly becoming one of the most important determinants of performance.

Cooling Enables Compute Performance

There’s a simple way to think about modern AI infrastructure:

Cooling enables compute performance, which unlocks AI capabilities.

That idea may sound obvious, but the industry has not always treated it that way.

For years, thermal management was often seen as a supporting function. The real innovation was assumed to be in chips, models, software frameworks, and networking fabrics.

That made sense when thermal systems could comfortably keep pace with compute.

But that is no longer the world we are in.

Today’s AI data centers are pushing into an entirely different class of power density. As GPUs become more powerful and racks become more densely packed, every performance gain also creates a thermal consequence. Virtually every watt of electrical power the compute hardware draws ultimately becomes heat. And that heat has to be removed, quickly and efficiently, or performance suffers.
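To put a rough number on that, the steady-state energy balance Q = m_dot * c_p * delta_T relates a heat load to the coolant flow needed to carry it away. The sketch below is only an illustration: the 120 kW rack load and 10 K coolant temperature rise are hypothetical figures, the function name is made up for this example, and the water properties are approximate room-temperature values.

```python
# Approximate properties of water near room temperature (assumed values).
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0
WATER_DENSITY_KG_PER_L = 0.997


def coolant_flow_lpm(heat_load_w: float, delta_t_k: float) -> float:
    """Water flow (liters per minute) needed to absorb heat_load_w
    with a coolant temperature rise of delta_t_k, from Q = m_dot * c_p * dT."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


# Hypothetical rack: 120 kW of heat, coolant allowed to warm by 10 K.
rack_heat_w = 120_000.0
print(f"{coolant_flow_lpm(rack_heat_w, 10.0):.1f} L/min at a 10 K rise")
print(f"{coolant_flow_lpm(rack_heat_w, 20.0):.1f} L/min at a 20 K rise")
```

Under these assumptions the required flow scales linearly with rack power and inversely with the allowed temperature rise, which is one reason denser racks put so much pressure on the cooling loop.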

That is why the thermal stack is emerging as a foundational layer of AI infrastructure.

The Three Stacks of AI Data Center Performance

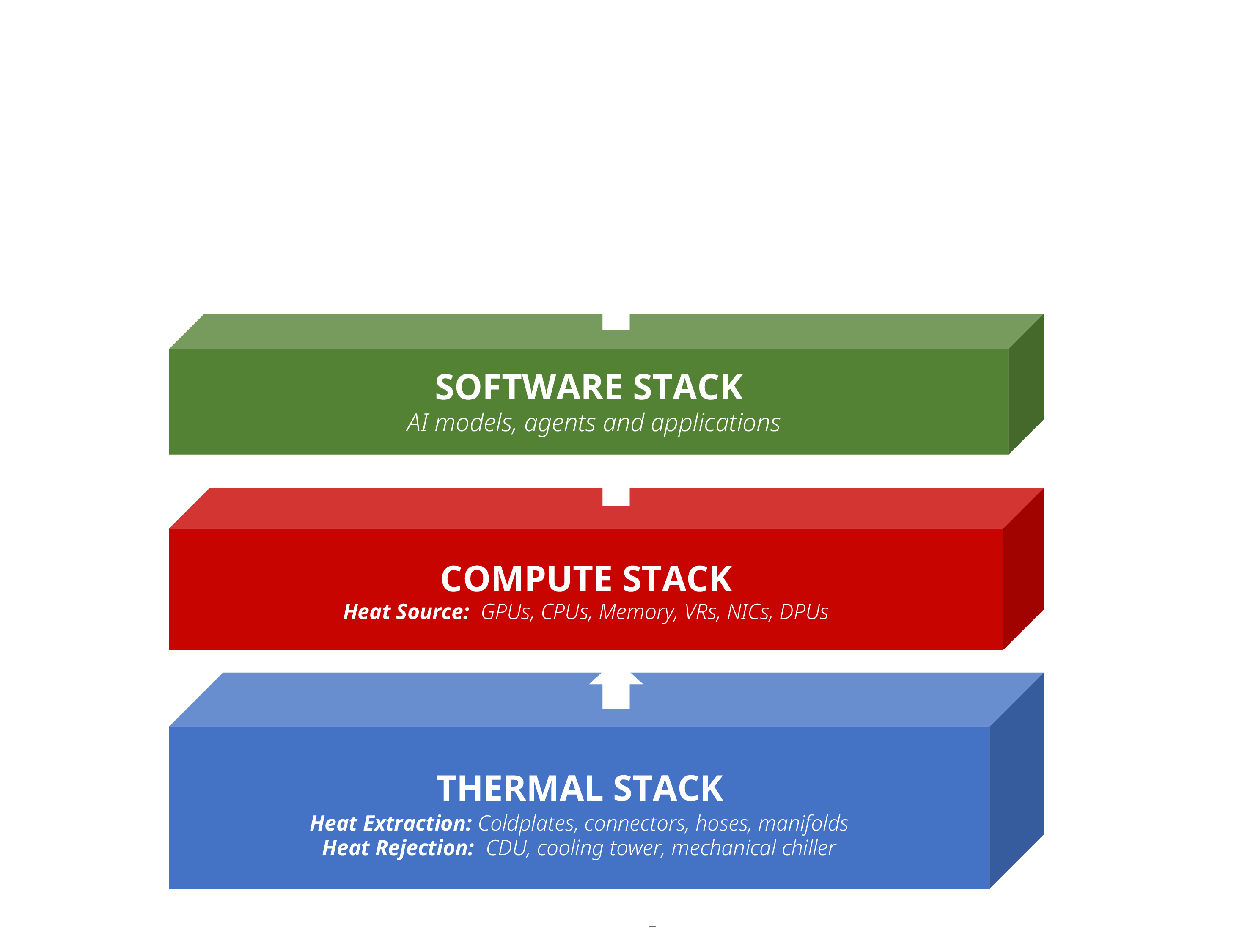

At a high level, AI infrastructure can be thought of as three interdependent layers.

1. The Software Stack

This is the layer most people see first:

AI models, agents, and applications.

This is where intelligence is expressed.

2. The Compute Stack

This is the hardware layer:

GPUs, CPUs, memory, NICs, DPUs, voltage regulators, and the surrounding compute architecture.

This is where performance is generated.

3. The Thermal Stack

This is the infrastructure that makes the compute stack sustainable:

The systems responsible for extracting heat from silicon and rejecting it into the environment.

This is where performance is preserved.

The relationship between these layers matters.

The software stack depends on the compute stack.

The compute stack depends on the thermal stack.

In that sense, the thermal stack is not just another supporting system. It is a performance-enabling foundation.

Why the Thermal Stack Matters Now

The reason this conversation is becoming urgent is simple: AI has changed the scale of the thermal problem.

Modern AI systems are no longer conventional server environments. They are increasingly being designed as highly dense compute systems optimized for massively parallel workloads. In these systems, performance is not just about the quality of the chip. It is also about how many high-performance devices can be packed into the same rack, operating at high speed, in close proximity, with low-latency interconnects.

That’s where thermal architecture becomes critical.

If heat cannot be removed efficiently, several things happen:

- components run hotter

- performance drops

- efficiency declines

- reliability risk rises

- rack density is constrained

- infrastructure cost climbs

This is why thermal architecture should no longer be thought of as an afterthought. It is becoming one of the key enablers of AI factory design.

What Is the Thermal Stack?

At its simplest, the thermal stack includes two major functions:

Heat Extraction

This is the set of technologies that pull heat away from the compute components themselves.

Examples include:

- coldplates

- thermal interfaces

- connectors

- hoses

- manifolds

This is where heat is first captured from GPUs, CPUs, memory, networking, and power electronics.

Heat Rejection

This is the infrastructure that removes that captured heat from the system and ultimately rejects it to the atmosphere.

Examples include:

- CDUs (coolant distribution units)

- cooling towers

- facility cooling systems

- mechanical chillers

Both matter. But as AI systems get denser, the heat extraction layer is becoming especially important. Removing heat efficiently at the component and tray level can dramatically improve the overall performance envelope of the system.
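One common way to reason about the heat extraction layer is a lumped thermal resistance model, where chip temperature is approximately coolant temperature plus power times thermal resistance. The article does not prescribe this model; it is a simplification used here for illustration, and the function names, the 85 °C limit, the 40 °C coolant, and the resistance values are all hypothetical.

```python
def junction_temp_c(coolant_temp_c: float, power_w: float,
                    thermal_resistance_k_per_w: float) -> float:
    """Estimated chip temperature for a lumped chip-to-coolant resistance."""
    return coolant_temp_c + power_w * thermal_resistance_k_per_w


def max_sustained_power_w(junction_limit_c: float, coolant_temp_c: float,
                          thermal_resistance_k_per_w: float) -> float:
    """Highest power the chip can dissipate without exceeding junction_limit_c."""
    if thermal_resistance_k_per_w <= 0:
        raise ValueError("thermal_resistance_k_per_w must be positive")
    headroom_k = junction_limit_c - coolant_temp_c
    return max(0.0, headroom_k / thermal_resistance_k_per_w)


# Hypothetical comparison of two coldplate designs at the same coolant temperature.
for r_th in (0.05, 0.03):
    budget = max_sustained_power_w(85.0, 40.0, r_th)
    print(f"R_th = {r_th} K/W -> about {budget:.0f} W sustained")
```

In this simplified picture, lowering the chip-to-coolant resistance raises the power a device can sustain before it has to throttle, without changing anything on the facility side.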

Density Is Now a Feature

One of the biggest shifts in AI infrastructure is that density is no longer something to avoid. It is something to unlock.

The closer high-performance devices can operate together in a rack, the more efficiently they can communicate, share data, and behave like a larger unified system. That creates enormous value in AI workloads.

But density only works if thermal systems can keep up.

This is why innovation in the thermal stack can directly affect compute density, rack architecture, and ultimately AI throughput.

In other words:

Better thermal architecture doesn’t just cool a rack. It can redefine how much performance a rack can deliver.
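A back-of-the-envelope way to see this: the number of devices a rack can actually run is capped by whichever runs out first, physical slots or cooling capacity. The sketch below assumes a hypothetical 72-slot rack with 1,200 W devices; the function name and all figures are illustrative, not taken from any specific product.

```python
def max_devices_per_rack(slot_count: int, cooling_capacity_w: float,
                         device_heat_w: float) -> int:
    """Devices a rack can run, limited by slots and by heat the cooling can remove."""
    if device_heat_w <= 0:
        raise ValueError("device_heat_w must be positive")
    thermally_supported = int(cooling_capacity_w // device_heat_w)
    return min(slot_count, thermally_supported)


# Hypothetical rack: 72 slots, each device dissipating 1,200 W.
for capacity_w in (40_000.0, 100_000.0):
    devices = max_devices_per_rack(72, capacity_w, 1_200.0)
    print(f"{capacity_w / 1000:.0f} kW of cooling -> {devices} devices")
```

With the smaller cooling budget the rack is thermally limited well below its slot count; with the larger one, every slot can be populated.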

The Next Frontier of AI Infrastructure

The industry has spent years optimizing the software stack and scaling the compute stack.

Now the next frontier is becoming clear.

The thermal stack is where a significant amount of future infrastructure innovation will happen, not just because systems need cooling, but because thermal design increasingly governs the practical limits of compute.

That includes:

- how densely systems can be built

- how efficiently they run

- how reliably they operate

- how much energy and facility overhead they require

- how much real-world AI performance they can sustain

As AI factories continue to scale, the companies that think deeply about thermal architecture will have an advantage.

The Thermal Stack: A Hidden Layer, But Not for Much Longer

For a long time, thermal infrastructure has been one of the least discussed layers of computing.

That is changing.

As the AI era pushes the physical limits of power, density, and performance, the thermal stack is moving into the spotlight. It is becoming more visible, more strategic, and more central to the future of AI infrastructure.

The software stack may define intelligence.

The compute stack may generate performance.

But the thermal stack is increasingly what makes that performance possible.

And in the age of AI factories, that hidden layer may turn out to be one of the most important layers of all.